Some of you may have seen this remarkably popular post by Andrej Karpathy (https://karpathy.ai) introducing what he calls “LLM Wiki”:

https://gist.github.com/karpathy/442a6bf555914893e9891c11519de94f

It describes an approach to knowledge management that involves three elements:

* Storing notes as Markdown files in a GitHub repository

* Updating those notes via an LLM that modifies the files on disk directly

* Browsing and reading the notes via Obsidian by pointing it at the Markdown repository

The role of Obsidian in this approach is very familiar to TiddlyWiki users: making a folder full of text files accessible as a wiki.

That raises the possibility of substituting TiddlyWiki for Obsidian in the LLM Wiki approach, which means implementing some degree of interoperability with Obsidian. Users would be able to choose between Obsidian and TiddlyWiki, or combine them. The goal, of course, is to drive adoption of TiddlyWiki.

You can see where I’ve got to in this repository. Note that the readme is trying to make a case for LLM Wiki users rather than TiddlyWiki users:

https://github.com/Jermolene/twillm/

Andrej’s workflow depends upon one Obsidian feature: it continually monitors the Markdown files on disk and dynamically loads any updates made by the LLM. I’ve made a PR to implement that feature in TiddlyWiki; see https://github.com/TiddlyWiki/TiddlyWiki5/pull/9806.

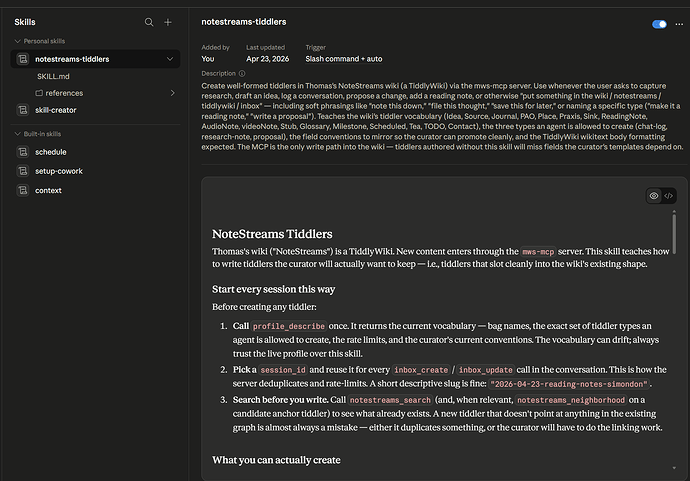
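
For anyone unfamiliar with what "monitoring the Markdown files on disk" involves, here is a minimal polling sketch in Python. It is only an illustration of the general idea, not the code in the PR and not how Obsidian does it; the function names (`snapshot`, `diff_snapshots`, `watch`) and the polling interval are my own assumptions.

```python
import os
import time
from typing import Callable, Dict, List, Tuple


def snapshot(root: str) -> Dict[str, int]:
    """Map every .md file under root to its modification time in nanoseconds."""
    state: Dict[str, int] = {}
    for dirpath, _dirnames, filenames in os.walk(root):
        for name in filenames:
            if name.endswith(".md"):
                path = os.path.join(dirpath, name)
                state[path] = os.stat(path).st_mtime_ns
    return state


def diff_snapshots(
    old: Dict[str, int], new: Dict[str, int]
) -> Tuple[List[str], List[str]]:
    """Return (changed_or_added, deleted) paths between two snapshots."""
    changed = sorted(p for p, mtime in new.items() if old.get(p) != mtime)
    deleted = sorted(p for p in old if p not in new)
    return changed, deleted


def watch(
    root: str,
    on_change: Callable[[List[str], List[str]], None],
    interval_seconds: float = 1.0,  # hypothetical default, tune to taste
) -> None:
    """Poll root forever, calling on_change whenever files change on disk."""
    previous = snapshot(root)
    while True:
        time.sleep(interval_seconds)
        current = snapshot(root)
        changed, deleted = diff_snapshots(previous, current)
        if changed or deleted:
            on_change(changed, deleted)  # e.g. reload those notes in the wiki
        previous = current
```

Calling `watch("path/to/notes", print)` would print the changed and deleted paths each time the LLM edits a file. A real implementation would more likely use the operating system's file-change notifications than polling, and would need to cope with files that are only partially written at the moment they are read.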

I should add that I started this journey with pmario’s MCP server. I think it is a better foundation for Karpathy’s original work than direct file access. For example, it allows for strategies to limit token consumption. I ended up moving away from MCP because I wanted to try to make TiddlyWiki a direct drop-in replacement for Obsidian, allowing existing users to maintain their existing mental model. I would, however, like to add back the MCP server as soon as possible, assuming we can do so without compromising LLM Wiki compatibility.

The window of opportunity to attract attention might be quite short. In particular, if Obsidian were to implement LLM access themselves, perhaps by implementing an MCP server, the opening would close.

It would be very helpful to have help with testing, and with spreading the word about this.

I should add that personally, there is no world in which I would send my personal notes to Anthropic, OpenAI or any other cloud service. I have been experimenting with running Qwen models on my Mac using Ollama. Their capabilities are far behind those of commercial providers, but they can still be useful.

I would be delighted to hear your thoughts and suggestions, and of course to accept pull requests.